Rethinking explainability of AI through design and shared understandings

Defined key principles for shared understanding, created thought-provoking designs to explore system dynamics, and published insights on fostering holistic approaches to AI development

OVERVIEW

As AI systems integrate into daily life, we need to rethink how we design for shared understanding. Traditional AI explainability focuses heavily on technical details and often neglects the perspectives of diverse stakeholders.

My master's thesis at TU Delft focused on building a shared understanding of AI systems through design. I explored how multiple stakeholders could better understand the future of residential shared mobility. To do this, I used speculative design and the Stack framework from FreedomLab to create a process that considered both social and technical implications.

In collaboration with the DCODE network and FreedomLab.

OUTPUT

- Defined criteria for shared understanding

- Designed speculative artifacts to surface system tensions

- Facilitated group discussions that bridged different perspectives

- Reflected on how combining speculative design with the Stack framework could foster a deeper, more holistic approach to future AI systems

The final report can be found here.

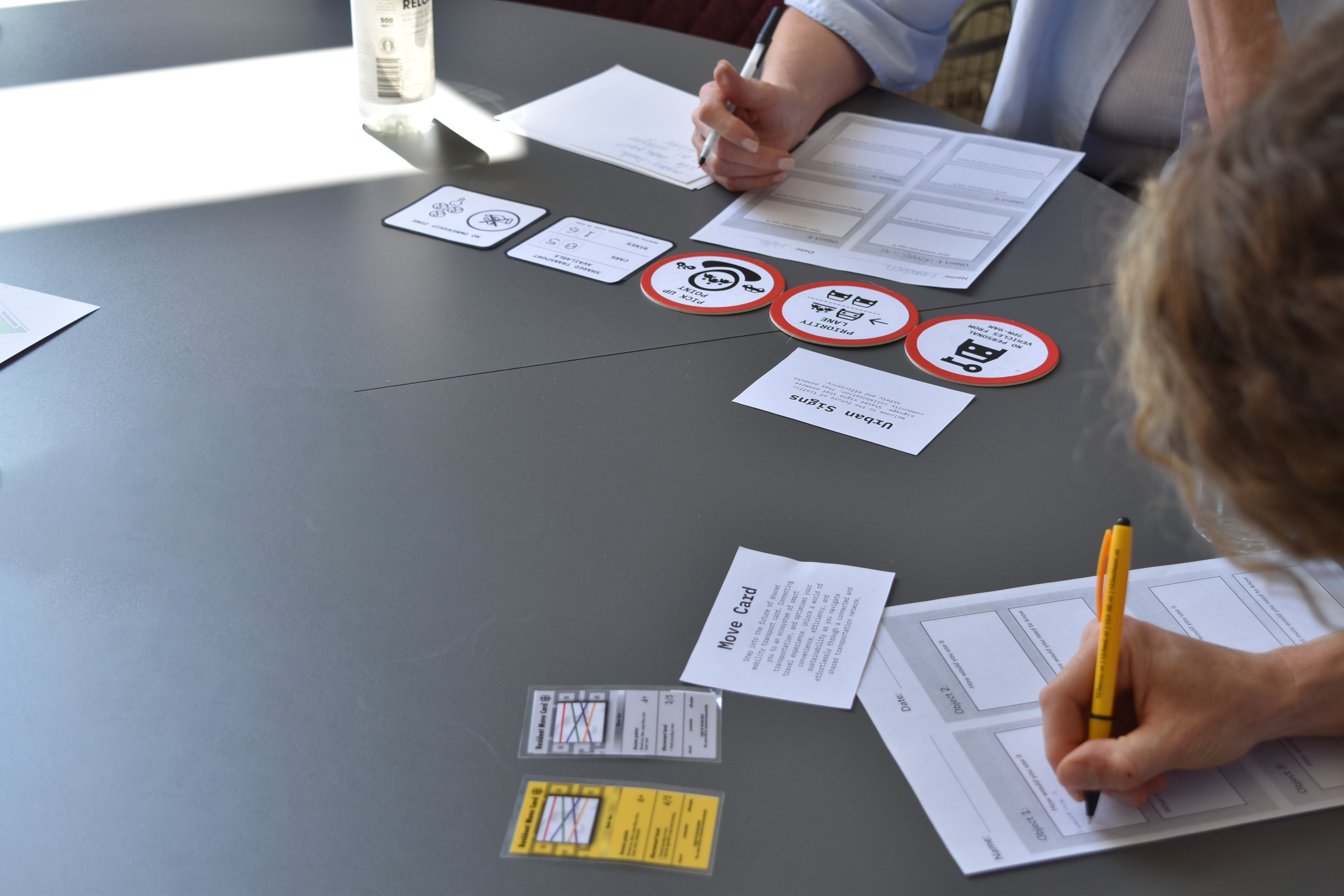

Photos from the multi-stakeholder sessions featuring designed artifacts of the future mobility system, including transport system cards, shared keys, and future road signs.

A screenshot from one of the sessions I hosted. The sessions included real estate developers, AI engineers, architects and policy officials.